Delivery Lead Time (DLT) is a software delivery metric popularised in the book Accelerate (2018). It measures the time it takes to ship changes for a given code base. I work on an Engineering Insights system at a BigTech company. Delivery Lead Time is one of the metrics we measure and provide for teams to use and drive improvement. Through my experience in developing and operating the Engineering Insights system I’ve learned a lot about Delivery Lead Time. Here I share my observations, best practices, and common misconceptions about the metric.

What is Delivery Lead Time?

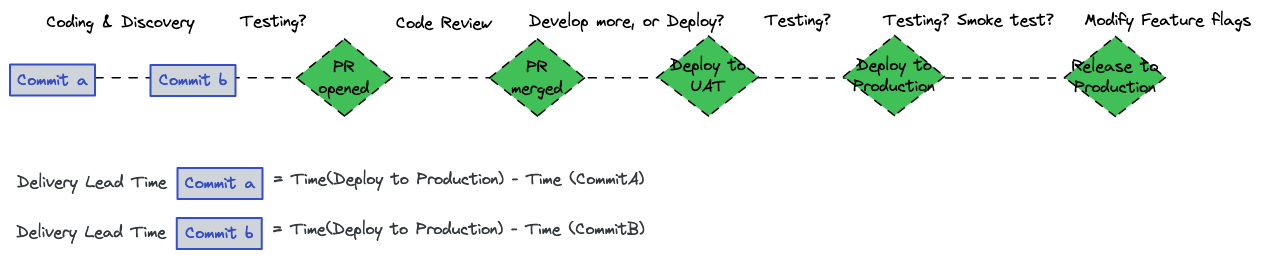

Delivery Lead Time measures how long it takes for code to get into production. In the Engineering Insights system I work on, DLT is recorded for every commit, measured from the time the commit was written to the time it was deployed to production. This fine-grained data is available for teams to investigate, but we also provide an aggregated median DLT ‘hero metric’ that we recommend teams track over time. For the rest of this article, when I refer to DLT I mean the Median DLT over a month of commits for a given project.
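As a rough illustration of that definition, here is a minimal Python sketch. It is not the Engineering Insights implementation: the function names, the `(authored_at, deployed_at)` pair representation, and the sample timestamps are all assumptions made for the example.

```python
from datetime import datetime, timedelta
from statistics import median


def commit_dlt(authored_at: datetime, deployed_at: datetime) -> timedelta:
    """DLT for one commit: time from authoring to production deployment."""
    return deployed_at - authored_at


def median_dlt_hours(commits: list[tuple[datetime, datetime]]) -> float:
    """Median DLT in hours over (authored_at, deployed_at) pairs."""
    hours = [commit_dlt(a, d).total_seconds() / 3600 for a, d in commits]
    return median(hours)


# Hypothetical commits from one month of a single project.
commits = [
    (datetime(2022, 6, 1, 9, 0), datetime(2022, 6, 1, 9, 45)),   # 0.75 h
    (datetime(2022, 6, 2, 14, 0), datetime(2022, 6, 2, 20, 0)),  # 6 h
    (datetime(2022, 6, 3, 16, 0), datetime(2022, 6, 6, 10, 0)),  # 66 h, waited over a weekend
]

print(median_dlt_hours(commits))  # 6.0
```

In practice the two timestamps would come from version control and the deployment system; how they are joined is specific to each organisation's tooling.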

Here’s an example breakdown of the DLT for a team that follows the Pull Request workflow.

Delivery Lead Time

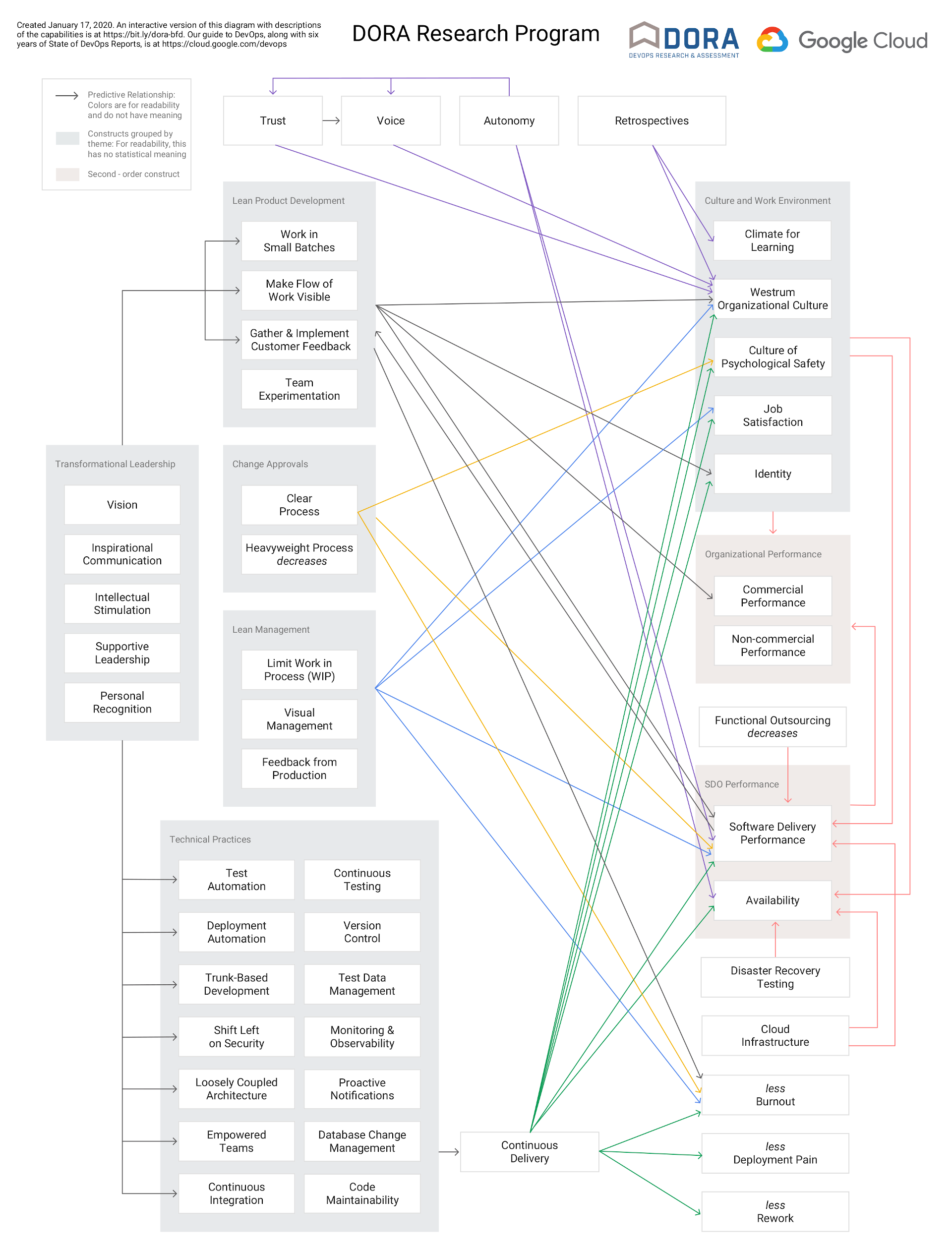

The specific workflow differs between teams. Fundamentally, the workflow, and therefore the DLT, is determined by a project and team’s DevOps Capabilities. These DevOps Capabilities can be split into two groups:

Engineering Practices (cultural, social, organisational)

- Commit hygiene

- Pairing/Mobbing

- Code Review

- Trunk based development

Project Capabilities (technical, implementation based)

- Time it takes for tests to run

- Reliability of the tests

- Trust in automated tests, manual testing

- Use of UAT and staging environments

- Continuous Deployment

- Time it takes for the deployment pipeline to run

- Reliability of the deployment pipeline

- Observability and Monitoring

The DLT, as a single number, does not reveal which of these components are the largest contributors. It will differ for teams based on how they work, and the sorts of technology stacks that they’re operating on. The DLT is a starting point for teams to investigate further into where they can improve.

How to use Delivery Lead Time

Delivery Lead Time measures the software delivery capability of a project; it does not measure the software delivery performance of a team. DLT is determined by the set of DevOps Capabilities of a project and team. For older code bases that have lacked investment, the state of the project can prevent a team from working in the way they want.

For example, a team may work on a small section of an older monolith which takes hours to deploy, has flaky tests, and has gaps in its automated test coverage; the team has little influence over the wider project. Here DLT can be used as a starting point to quantify the cost of operating in the status quo, and to make a case for the investment needed to improve the situation.

DORA DevOps Capability Model

What is a good Delivery Lead Time?

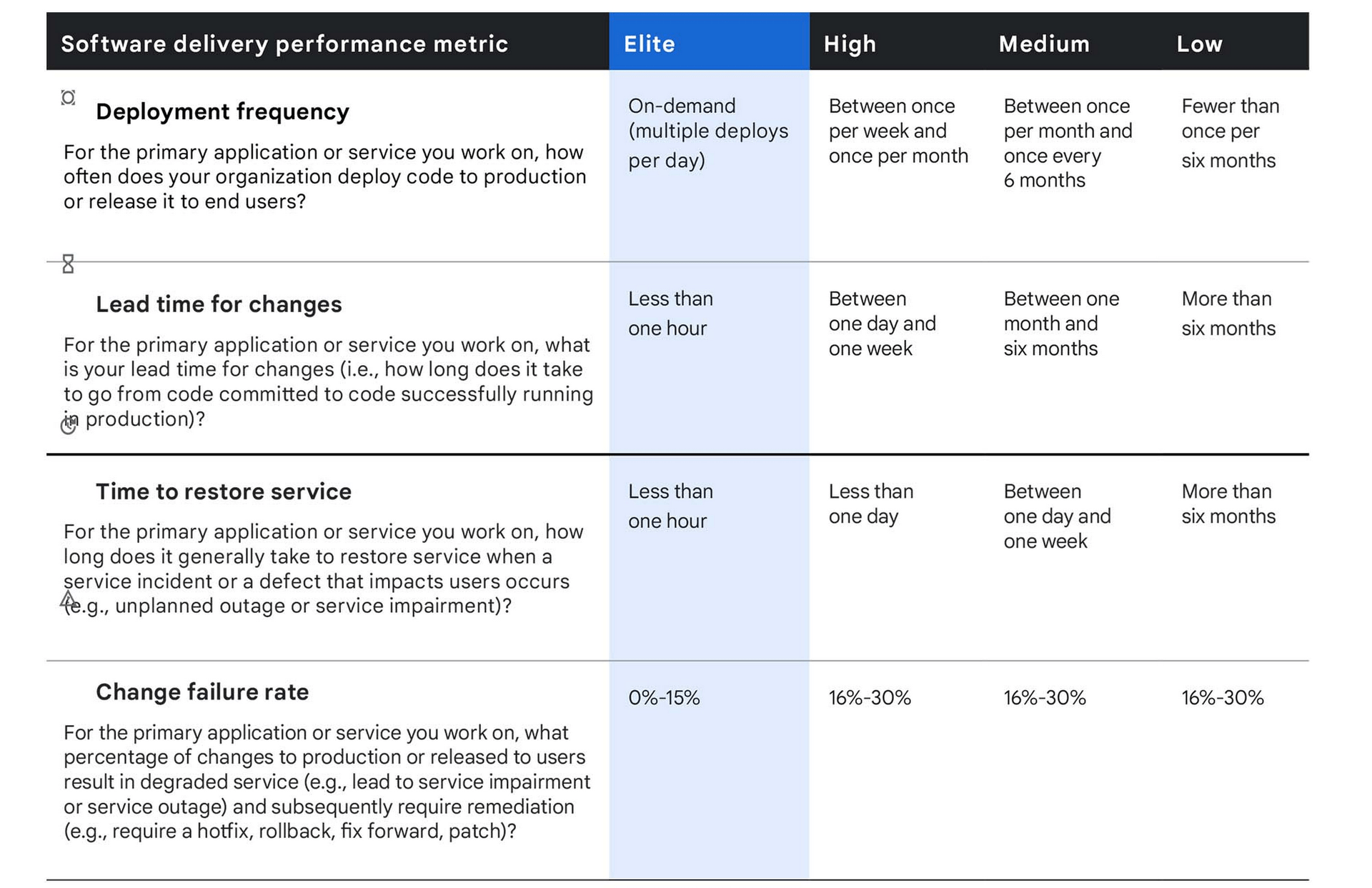

Metrics require a benchmark. Assuming the DLT is being measured for a project, how do we know if it’s good or not? The DORA report gives this breakdown:

DORA Performance Categories

What’s missing is the time scale it’s measured over.

High performing teams I’ve worked with and observed have many commits with DLTs of less than one hour. During these sessions they’ve been pair programming and working on tasks that they can iterate on quickly, pushing their changes immediately with no pull request; the changes are then continuously deployed to production. Great! Yet for these teams, the Median DLT over a month is in the 3 to 12 hour range. Why is this? Not every commit is written during a pair programming session.

Time zones, schedules, energy levels, and the nature of the task all determine when to use pair programming. When not pair programming, they use the Pull Request workflow. This adds the time needed for the author to raise the PR, for colleagues to review it, for requested changes to be incorporated, and finally for the PR to be approved and merged.

In my view a Median DLT of less than 24 hours is excellent.

Taking the median DLT over a month’s worth of commits is important. Pull Requests can be submitted on a Friday afternoon and not reviewed until the next week. There are holidays and sick leave, and sometimes the team might be focused on discovery work instead of development. All of these are fine and healthy, and we don’t want them to have a disproportionate impact on the metric. The median is robust to a handful of such long-running commits in a way that the mean is not.
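To make the effect of those long-waiting commits concrete, here is a small Python comparison of the median and the mean. The DLT values are invented for illustration and do not come from any real project.

```python
from statistics import mean, median

# Hypothetical per-commit DLTs in hours for one month.
# Most changes ship within a working day; two waited over a weekend or holiday.
dlt_hours = [0.5, 1, 2, 3, 4, 5, 6, 8, 70, 120]

print(median(dlt_hours))  # 4.5
print(mean(dlt_hours))    # 21.95
```

Two slow commits out of ten pull the mean to almost a full day, while the median still reflects how the typical change was delivered.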

Another way of viewing the DORA ‘Less than one hour’ bracket is whether it’s possible to write code and have it running in production in less than an hour. From a data point of view this is “does the team have at least one commit where DLT is less than an hour?” This is relevant when we think about the time it takes for teams to recover from a bug or fault. In that circumstance they’d want to deploy a fix as fast as possible. I think this plausible time to recovery is better than the Mean Time to Restore metric, which I discuss in depth in State of the DORA DevOps Metrics 2022.
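Expressed as a query over per-commit data, that question is a simple existence check. The sketch below assumes DLTs are already available as a list of hours; the function name and sample values are hypothetical.

```python
def can_ship_within(dlt_hours: list[float], threshold_hours: float = 1.0) -> bool:
    """True if at least one commit reached production faster than the threshold."""
    return any(h < threshold_hours for h in dlt_hours)


# Hypothetical projects: one has shipped a change in 45 minutes, the other never under 3 hours.
print(can_ship_within([0.75, 6.0, 66.0]))  # True
print(can_ship_within([3.0, 6.0, 48.0]))   # False
```

Taking `min(dlt_hours)` instead gives the fastest observed path to production, which is one way to approximate the plausible time to recovery.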

How Teams can use Delivery Lead Time

Providing the DLT metric to teams and telling them to use it doesn’t work. Teams must begin from the starting point of wanting to improve their software delivery capabilities. Sometimes this is an easy and open conversation. Some weak signals I’ve noticed that can prompt the conversation are:

- Pull Requests are not quickly looked at. Team members need to repeatedly ping individuals or prompt at a standup for their PRs to be reviewed.

- Pull Requests are too large. The PR review takes days with many comments and changes requested.

- Many incidents are created due to change failures. Use this as a prompt to investigate if large changesets are being deployed, or if the test suite isn’t comprehensive.

- Incidents are created due to the deployment pipeline failing.

- Individuals do not want to deploy the software. Indicates the deployment process is time consuming.

- Only one person in the team does deployments. Indicates the deployment process is complex.

- Anything related to software delivery in a Retrospective.

With the team bought in on wanting to improve the software delivery process there are a couple of options:

- Pick the low hanging fruit, make small improvements everywhere in the process.

- Focus and develop a DevOps Capability.

The first is really tempting, and from what I’ve seen is most common. In backlog terms this might result in a bunch of ‘1 pointers’ which make minor improvements to all stages of the process: fix a set of flaky tests, upgrade the test framework so it runs faster, parallelise some of the steps in CI, add some more linting and validation steps to catch things at CI, etc. This is important work, but I view it as optimising for a local maximum. The team’s way of working is preserved; it does not evolve.

The second, developing a DevOps Capability, requires more investment but also results in a more significant day to day improvement for Engineers. Examples of this include moving from manual deployments to fully automated Continuous Deployment, the capability for Engineers to spin up Integration Environments on demand so they can test locally before pushing to CI, or a team switching to pair programming and trunk based development.

Whatever the method, set explicit objectives around the DevOps Capabilities and level of maturity that is being aimed for. DLT is a measure of progress (or regression) in implementing this work.

Don’t expect change overnight. While some of the DevOps Capabilities require technical implementations, it’s often a cultural change within the team that makes the most of them. Continuing an example above, if the team can now spin up Integration Environments on demand locally, they actually need to incorporate them into their workflow in order to receive the benefits.

Context Matters

Engineering Metrics can be used by management to identify codebases that need improvement. On the face of it, Delivery Lead Time seems like a strong signal – it represents the time it takes for engineers to get code into production. As with any metric, DLT simplifies and hides a lot of complexity. Below are some thoughts and learnings on how to consider DLT in the organisational context.

The type of deployable adds context to the DLT. Across my organisation we deploy APIs, background workers, SPAs, data applications, databases, and IaC repos. We deploy large legacy monoliths. We deploy small new microservices. As engineers we know these have very different software development and deployment realities. An ‘Elite DLT’ achievable by a Go microservice may never be achievable by a Database, no matter how much work is done.

Consider improvements to tooling which is shared between components in the software development and delivery process. This may be a shared testing server, or a common CI/CD pipeline in a polyrepo project which deploys multiple components. Improving this shared tooling will have a large impact on all components which use it.

What business value does a given project provide? In large organisations this question is sometimes difficult to answer. It’s better to invest and improve the software delivery capabilities of a high value project with a DLT of 48 hours than a low value project with a DLT of one week.

How often is code changed within a project? If a lot of engineers are working in it then even small improvements scale. Engineering metrics which reflect activity such as Deployment Frequency and Pull Request Count quantify this.

Prioritise removing toil over improving speed. Consider two components with identical DLTs. One has an extremely slow but reliable deployment pipeline - it takes a while but it’s completely automated. The other has a fast deployment pipeline which often breaks due to flaky tests - it requires babysitting by the deployer. Improving the latter pipeline saves on toil and makes engineers’ day-to-day work better. See: Zero Cost Deployments.
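One hypothetical way to put a number on toil is the expected engineer attention per successful deployment. The sketch below is an assumption of mine, not a metric from the Engineering Insights system: it assumes failed attempts are retried until one succeeds, that attempts are independent, and that the hands-on minutes and success rates shown are invented.

```python
def expected_attention_minutes(hands_on_minutes_per_attempt: float, success_rate: float) -> float:
    """Expected hands-on minutes per successful deploy.

    With independent attempts retried until success, the expected number
    of attempts is 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return hands_on_minutes_per_attempt / success_rate


# Slow but reliable: the engineer starts the pipeline and walks away.
print(expected_attention_minutes(2, 1.0))   # 2.0
# Fast but flaky: each attempt needs babysitting, and half of them fail.
print(expected_attention_minutes(15, 0.5))  # 30.0
```

Under these made-up numbers the two pipelines could show the same DLT while the flaky one costs fifteen times more engineer attention per deployment.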

Not all teams need to track the Delivery Lead Time metric. I’ve seen teams working on greenfield projects who have superb engineering practices. They practice pair programming and pure trunk based development, and their deployment pipeline is completely automated. These teams don’t have any problems around software delivery. For them it would be a waste of time to set objectives to improve delivery practices or monitor the DLT.

Conclusion

Delivery Lead Time measures the time it takes for code to be deployed into production. It abstracts a lot of complexity, and so must always be treated carefully for a given team and project’s context. DLT does not measure team performance, nor does it measure engineering output or value delivered to customers. DLT measures how fast code can be changed in a given project. For a team, the objective isn’t to reduce DLT; objectives should be set around improving and developing new DevOps Capabilities. Delivery Lead Time should be used as a measure to track progress against the objective.

For more on DORA Metrics read State of the DORA DevOps Metrics in 2022.